It’s been ages since the Mac mini received an update, so we can see how fans of Apple’s smallest Mac would be happy for any improvements. On the flipside, because the mini hasn’t been updated for four long years, you may have convinced yourself that Apple would make dramatic changes—yet the update is pretty much limited to a processor upgrade.

If you were anticipating a major overhaul, your disappointment is understandable. But get over it, because the new Mac mini is a worthy Mac for $799. In fact, in our benchmarks, its performance is fast enough to give the iMac some competition. If you’re buying a new Mac, you should definitely consider the Mac mini, because you could end up saving some money and still get a solid, fast Mac.

And if you own an older Mac mini and love the form factor, you’ll want to upgrade. The performance, especially with multi-core professional software, is worth the money. This review takes a look at the $799 Mac mini, which is now Apple’s cheapest Mac.

Who is the Mac mini for?

When the Mac mini made its debut, it was marketed as the affordable entry point for Mac newcomers.

All it needed was an external display (the first mini came with a VGA-to-DVI adapter) and USB input devices. With the base model priced at $499, it lagged behind Apple’s faster, more expensive Macs, but it was a good performer for the price.

[Photo: Dan Masaoka/IDG. The Mac mini has proven to be popular with general consumers and demanding professionals alike.]

But as it turned out, the Mac mini found a market with pro users thanks to its small footprint. It’s been popular with software developers and content creators, and has even found a home in co-location data centers.

In response, Apple changed its Mac mini message, targeting professionals and touting the mini’s performance instead of its affordability. Apple’s Mac mini website calls the new Mac “All workhorse” and the whole “switcher” messaging of the original Mac mini is gone.

But that doesn’t mean the mini is no longer for switchers and everyone else. It’s still a good Mac for the general consumer, and in fact, it offers considerable bang for the buck. The main drawback is that there’s no longer a sub-$500 price tag in Apple’s Mac lineup (though the $799 Mac mini is $300 cheaper than the entry-level 21.5-inch iMac).

Inside the Mac mini: CPU, SSD, RAM, T2

During a briefing, Apple told us that faster Mac mini performance was at the top of customers’ wishlists.

With that in mind, Apple upgraded the CPU with eighth-generation Intel Core processors—desktop CPUs, not mobile CPUs. Apple says the new Mac mini is up to five times faster than the previous one (which, after all, was released in October 2014).

The CPU in the $799 Mac mini is a 3.6GHz Core i3. This is a quad-core processor that offers two more processing cores than the chip in the previous Mac mini. Note that this particular Mac mini’s CPU doesn’t support Turbo Boost, a feature that allows a processor to run faster than its stated frequency when it is operating under its limits for power, current, and temperature. However, Turbo Boost up to 4.1GHz is available in the 3.0GHz 6-core Core i5 processor that’s spec’d for the $1,099 Mac mini.

You’ll also find a performance upgrade in the Mac mini’s storage hardware—sort of. In the past, you could choose from a hard drive (slow but spacious), a Fusion Drive (the capacity of a hard drive but with better speed), or flash storage (a fast and pricey solid-state drive). Now, Apple offers only solid-state drives, which offer the best speed.

The $799 model comes with a 128GB drive, but if that isn’t enough, Apple offers upgrades all the way up to 2TB if you’re willing to pay. The SSDs are PCI-e cards and Apple doesn’t consider them user-upgradeable. So, if you prefer to house your storage inside the computer instead of attaching an external drive, you might consider shelling out more money for an upgrade.

[Photo: Dan Masaoka/IDG. The plastic bottom cap pops off, but then you’ll find an aluminum hatch held in place by six torx screws. And when you get the hatch off, you’ll find that the insides are not readily user accessible.]

The $799 Mac mini comes standard with 8GB of 2666MHz DDR4 memory, installed as a pair of 4GB SO-DIMMs. The mini supports a maximum of 64GB, and you can upgrade the memory later, but Apple doesn’t consider the Mac mini to be user-configurable, and it recommends that memory upgrades be performed by a certified Apple service provider. Doing it on your own will void the warranty. You can easily open up the Mac mini on your own: The circular plastic cap at the bottom of the Mac mini pops off to unveil an aluminum hatch that’s kept in place with torx screws. But what you’ll find when you remove the hatch is that the memory is placed in a sort of a cage, and that you’ll need to remove the fan and other components to get access.

It’s not a trivial task.

The Mac mini includes a T2 Security Chip to offload security features away from the main CPU. The T2 handles the Mac mini’s secure boot feature and storage encryption for FileVault, and it houses a coprocessor for Secure Enclave, which operates Touch ID. Unfortunately, there currently isn’t any keyboard with Touch ID support that can be attached to the Mac mini. That said, the iMac is due for an update soon, so maybe we’ll see a new Magic Keyboard with Touch ID when that desktop machine arrives.

How fast is the Mac mini?

To test the speed of the $799 Mac mini, we used the Geekbench 4 benchmark tool.

We compared the Mac mini’s results to the three Mac mini models from 2014, the current $1,499 iMac, and the 2013 3.5GHz 6-Core Xeon E5 Mac Pro.

[Chart: Geekbench 64-bit Single-Core test results (IDG). Higher scores/longer bars indicate better performance.]

Not surprisingly, the $799 Mac mini, with its eighth-generation 3.6GHz Core i3 processor, offers a nice single-core boost over its 2014 predecessors. We saw a 29 percent jump over the $999 2.8GHz Core i5 model, a 34 percent boost over the $699 2.6GHz Core i5 model, and a 45 percent improvement over the $499 1.4GHz Core i5 Mac mini.

The question is, Are these improvements enough for owners of the 2014 Mac mini to upgrade? Even in single-core apps (e.g., mail, browsers, iTunes, and even some consumer-level video and image editors), the boost is significant, thanks to eighth-generation Intel chip improvements and the clock speed bump. So, if you have a 2014-vintage $499 or $699 Mac mini, you’ve probably gotten your money’s worth from the machine, and upgrading to the new $799 model is a good investment. And even if you bought the 2014 $999 model, upgrading should be a serious consideration. Interestingly, the single-core performance of the $799 Mac mini isn’t far off from the 2017 21.5-inch 3.4GHz Core i5 iMac that sells for $1,499. The iMac is only 4 percent faster. Another interesting data point: The new Mac mini outperforms the 2013 3.5GHz Xeon E5 Mac Pro by 23 percent.

Keep in mind that this is in single-core performance, and the Mac mini versus Mac Pro story changes in our next suite of tests.

[Chart: Geekbench 64-bit Multi-Core test results (IDG). Higher scores/longer bars indicate better performance.]

Because Apple has changed the marketing message with the new Mac mini, its multi-core performance will draw more attention than before.

The $799 Mac mini has four processing cores, two more than in the previous models. So the newer CPU and extra processing cores combine to make the $799 Mac mini a mighty machine for apps that can use multiple cores (pro-level video and image editors, as well as developer tools, for example). In the Geekbench 64-bit Multi-Core test, the $799 Mac mini more than doubled the performance of the three older models. Bottom line: If you use apps that can take advantage of multiple cores, you’re going to see a huge speed increase with the new Mac mini. It’s well worth the cost of upgrading. When you compare the $799 Mac mini to the $1,499 21.5-inch iMac with a quad-core 3.4GHz Core i5, you’ll find an eye-opening result: the Geekbench 4 scores are practically the same.

We ran a few more benchmarks to compare the $799 Mac mini to the $1,499 iMac. When it comes to graphics performance, the iMac’s 4GB Radeon Pro 560 graphics card gives it a significant edge in frame-rate performance over the Mac mini’s Intel UHD Graphics 630. But in two other benchmarks, one of them a render test, the Mac mini and the iMac finished neck and neck. The Mac mini, however, is slower than the 2013 6-core 3.5GHz Xeon E5 Mac Pro, which is five years old and costs $2,299.

Still, when you consider the price difference, the Mac mini’s multi-core speed is impressive.

Connectivity and ports on the new Mac mini

One of the reasons the Mac mini has been such a beloved machine among Mac users is that it comes with so many ports in such a small package.

Fortunately, it still has a lot of ports, but Apple has updated the equipment to match its current philosophy, which focuses on Thunderbolt/USB-C. The Mac mini comes with four Thunderbolt/USB-C ports, and you can connect two or three displays through these ports, depending on the screen resolution used for each display. If you don’t have a USB-C equipped display, you will need an adapter.

You can also connect an HDMI-equipped display to the Mac mini’s HDMI 2.0 port.

[Photo: Dan Masaoka. The 2018 Mac mini’s rear ports. Got more than two USB-A devices? You’ll need to buy a hub.]

If you have a lot of USB-A devices to connect, you’ll be disappointed in the reduction of USB-A ports from four down to two. But if you don’t use all of the Mac mini’s USB-C ports and you want to connect a USB-A device, you can use a USB-C to USB-A adapter. An even better investment would be a USB hub, which can connect to the Mac mini via either USB-A or USB-C.

For networking, the Mac mini comes standard with a gigabit ethernet jack and Wi-Fi. Apple does offer a $100 upgrade to 10Gb ethernet, which will be of interest to pro users, render farm implementations, and more. The Mac mini also has Bluetooth 5.0 and a headphone jack.

Same Mac mini design as before

The long gap between updates lent itself to speculation, with Apple fans compiling wish lists for the new Mac mini. Macworld writers and editors certainly haven’t been on the sidelines.

Much of the speculation focused on the Mac mini’s form factor, and many of us thought the new machine could be smaller than the 2014 model, an idea inspired by small PC devices. But in the end, Apple decided not to change the Mac mini’s design at all, except that it now comes in space gray instead of silver. It’s the same shape and size as before, a square with sides measuring 7.7 inches, a height of 1.4 inches, and rounded corners. You can stack it on top of the previous Mac mini, and it lines up perfectly. The Mac mini’s case is made of 100 percent recycled aluminum.

[Photo: MacStadium co-location center uses thousands of Mac minis. It looks like installations like this one influenced Apple’s decision to stick with the Mac mini design.]

Probably the main reason why Apple stuck with the design can be seen in a feature that the company published during the Mac mini announcement. Among other clever uses for the Mac mini, we see them in a co-location data center where 8,000 Mac minis are deployed. The photo of MacStadium’s facility is impressive, with row after row of Mac minis, but could you imagine what you’d have to do to replace all those old minis to accommodate a new form factor?

It certainly may discourage upgrading the machines in enterprise environments. Perhaps it would be nice if the Mac mini were smaller, lending itself to even more uses, but it seems Apple determined a footprint reduction wasn’t a priority. For a majority of people, the Mac mini’s size works, and the new Mac mini can simply slide into the space of the old one, no muss, no fuss.

Bottom line

There are customers who lament the fact that Apple no longer offers a Mac for under $500, and that Apple went from offering three desktop Macs for under $1,000 to just one. But this is the new reality: $799 is the new entry point, and it’s not going to go any lower. That being said, at $799, the 3.6GHz quad-core Core i3 Mac mini offers an intriguing combination of performance and value.

In many instances, especially with multi-core apps, the Mac mini is as fast as the current $1,499 21.5-inch 3.4GHz quad-core Core i5 iMac (which was released in 2017). You could decide to buy a refurbished 4K display and input devices with a $799 Mac mini instead of a $1,499 iMac, and you’ll save a little bit of money while getting comparable performance. Whether you should upgrade from the previous Mac mini is a no-brainer: Do it. If you use apps that can take advantage of multiple cores, you’ll see a huge improvement that’s well worth the cost. Even if you don’t use multi-core apps and use only consumer-level software, you’ll see a marked improvement in speed. You may have to buy a USB hub and a video adapter, but it’s worth it.

September 29, 2012

This article has had almost 30,000 views. Thanks for reading it. When I wrote this article over a year ago, most people believed mobile benchmarks were a strong indicator of device performance. Since then a lot has happened: Several manufacturers were caught cheating, and some of the most popular benchmarks are no longer used by leading bloggers because they are too easy to game.

By now almost every mobile OEM has figured out how to “game” popular benchmarks including 3DMark, AnTuTu, Vellamo 2 and others. Apple hasn’t been called out yet for the iPhone, but there is a high probability it is employing one or more of the techniques described below, such as driver tricks.

Although Samsung and the Galaxy Note 3 have received a bad rap over this, the actual impact on their benchmark results was fairly small. Even when it comes to the Samsung CPU cheats, this time around the performance deltas were only 0-5%.

11/26/13 Update: 3DMark just delisted mobile devices with suspicious benchmark scores.

2/1/17 Update: XDA just accused Chinese phone manufacturers of cheating on benchmarks.

Mobile benchmarks are supposed to make it easier to compare smartphones and tablets. In theory, the higher the score, the better the performance.

You might have heard the iPhone 5 beats the Samsung Galaxy S III in some benchmarks. It’s also true the Galaxy S III beats the iPhone 5 in other benchmarks, but what does this really mean?

And more importantly, can benchmarks really tell us which phone is better than another?

Why Mobile Benchmarks Are Almost Meaningless

Benchmarks can easily be gamed – Manufacturers want the highest possible benchmark scores and are willing to cheat to get them.

Sometimes this is done by optimizing code so it favors a certain benchmark. In this case, the optimization results in a higher benchmark score, but has no impact on real-world performance. Other times, manufacturers cheat by tweaking drivers to lower the quality in order to improve performance. The bottom line is that almost all benchmarks can be gamed. Computer graphics card makers found this out a long time ago, and there are many well-documented accounts of them cheating to improve their scores.

Here’s an example of this type of cheating: Samsung created a white list for Exynos 5-based Galaxy S4 phones which allows some of the most popular benchmarking apps to shift into a high-performance mode not available to most applications. These apps run the GPU at 532MHz, while other apps cannot exceed 480MHz. This cheat was reported by one of the most respected names in both PC and mobile benchmarking.
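To put that clock gap in perspective, here is a minimal sketch that works out how much extra GPU frequency the whitelisted benchmark apps get. It uses only the two frequencies quoted above. The function name is hypothetical, and clock headroom is only a rough ceiling on any score gain, not a measured result.

```python
def clock_headroom_percent(boosted_mhz, capped_mhz):
    """Return how much higher the boosted clock is than the capped clock, in percent."""
    return (boosted_mhz - capped_mhz) / capped_mhz * 100.0


# Frequencies quoted in the article: whitelisted benchmark apps vs. other apps.
headroom = clock_headroom_percent(532, 480)
print(f"Whitelisted apps get {headroom:.1f}% more GPU clock")
```

A gap of roughly a tenth in clock speed is enough to reorder devices that sit close together in a ranking.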

Samsung responded that “the maximum GPU frequency is lowered to 480MHz for certain gaming apps that may cause an overload, when they are used for a prolonged period of time in full-screen mode,” but it doesn’t make sense that S Browser, Gallery, Camera and the Video Player apps can all run with the GPU wide open, but that all games are forced to run at a much lower speed.

Samsung isn’t the only manufacturer accused of cheating. Back in June, Intel shouted at the top of its lungs about the results of a report that claimed its Atom processor outperformed ARM chips by Nvidia, Qualcomm and Samsung. This raised quite a few eyebrows, and closer inspection showed the Intel processor was not completely executing all of the instructions. After an updated version of the benchmark was released, Intel’s scores dropped. Was this really cheating? You can decide for yourself, but it’s hard to believe Intel didn’t know its chip was bypassing large portions of the tests AnTuTu was running.

It’s also possible to fake benchmark scores.

Intel has even gone so far as to create its own suite of benchmarks that it admits favor Intel processors. You won’t find the word “Intel” anywhere on the benchmark’s website, but if you check the small print on some pages you’ll find they admit “Intel is a sponsor and member of the BenchmarkXPRT Development Community, and was the major developer of the XPRT family of benchmarks.” Intel also says “Software and workloads used in performance tests may have been optimized for performance only on Intel microprocessors.” Bottom line: Intel made these benchmarks to make Intel processors look good and others look bad.

Benchmarks measure performance without considering power consumption – Benchmarks were first created for desktop PCs. These PCs were always plugged into the wall and had multiple fans and large heat sinks to dissipate the massive amounts of power they consumed.

The mobile world couldn’t be more different. Your phone is rarely plugged into the wall — even when you are gaming. Your mobile device is also very limited on the amount of heat it can dissipate and battery life drops as heat increases. It doesn’t matter if your mobile device is capable of incredible benchmark scores if your battery dies in only an hour or two.

Mobile benchmarks don’t factor in the power needed to achieve a certain level of performance. That’s a huge oversight, because the best chip manufacturers spend incredible amounts of time optimizing power usage. Even though one processor might slightly underperform another in a benchmark, it could be far superior, because it consumed half the power of the other chip. You’d have no way to know this without expensive hardware capable of performing this type of measurement.

Benchmarks rarely predict real-world performance – Many benchmarks favor graphics performance and have little bearing on the things real consumers do with their phones. For example, no one watches hundreds of polygons draw on their screens, but that’s exactly the type of thing benchmarks do. Even mobile gamers are unlikely to see increased performance on devices which score higher, because most popular games don’t stress the CPU and GPU the same way benchmarks do.

Some benchmarks focus on things like high-level 3D animations. One reviewer recently said, “Apple’s A6 has an edge in polygon performance and that may be important for ultra-high resolution games, but I have yet to see many of those. Most games that I’ve tried on both platforms run in lower resolution with an up-scaling.” For more on this topic, scroll down to the section titled: “Case Study 2: Is the iPhone 5 Really Twice as Fast?”. For example, the iPhone 5s starts up only 1 second faster than the iPhone 5 (23 seconds vs.

The iPhone 5s only loads the Reddit.com site 0.1 seconds faster than the iPhone 5. These differences are so small it’s unlikely anyone would even notice them.

Would you believe the iPhone 4 shuts down five times faster than the iPhone 5s? It’s true (4 seconds vs. 21.6 seconds). Another video shows that even though the iPhone 5s does better on most graphics benchmarks, when it comes to real world things like scrolling a webpage in the Chrome browser, Android devices scroll significantly faster than an iPhone 5s running iOS 7.

[Image caption: The iPhone 5s appears to do well on graphics benchmarks until you realize that Android phones have almost 3x the pixels.]

Some benchmarks penalize devices with more pixels — Most graphic benchmarks measure performance in terms of frames per second. GFXBench (formerly GLBenchmark) is the most popular graphics benchmark. Apple has dominated in the scores of this benchmark for one simple reason. Apple’s iPhone 4, 4S, 5 and 5s displays all have a fraction of the pixels flagship Android devices have. For example, in the chart above, the iPhone 5s gets a score of 53 fps, while the LG G2 gets a score of 47 fps.

Most people would be impressed by the fact that the iPhone 5s got a score that was 12.8% higher than the LG G2, but when you consider the fact the LG G2 is pushing almost 3x the pixels (2,073,600 pixels vs. 727,040 pixels), it’s clear the Adreno 330 GPU in the LG G2 is actually killing the GPU in the iPhone 5s. The GFXBench scores on the 720p Moto X (shown above) are further proof that what I am saying is true.
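The comparison can be made concrete by multiplying each frame rate by the number of pixels in each frame. The sketch below uses only the frame rates and pixel counts quoted in this section. The helper name is hypothetical, and treating rendering cost as proportional to pixel count is a simplifying assumption, since real GPU workloads do not scale perfectly linearly with resolution.

```python
def pixels_per_second(fps, pixels_per_frame):
    """Approximate fill workload: frames per second times pixels per frame."""
    return fps * pixels_per_frame


# Figures quoted in the article (on-screen frame rate and panel pixel count).
iphone_5s = pixels_per_second(53, 727_040)
lg_g2 = pixels_per_second(47, 2_073_600)

fps_lead = (53 / 47 - 1) * 100        # iPhone 5s lead in raw frame rate, percent
throughput_ratio = lg_g2 / iphone_5s  # LG G2 lead in pixels drawn per second

print(f"iPhone 5s frame-rate lead: {fps_lead:.1f}%")
print(f"LG G2 pixel throughput: {throughput_ratio:.2f}x the iPhone 5s")
```

Under that assumption, the phone with the lower on-screen score is drawing about two and a half times as many pixels per second.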

This bias against devices with more pixels isn’t just true with GFXBench; you can see the same behavior with graphics benchmarks like Basemark X shown below (where the Moto X beats the Nexus 4).

[Image caption: More proof that graphics benchmarks favor devices with lower-res displays.]

Some popular benchmarks are no longer relevant – SunSpider is a popular JavaScript benchmark that was designed to compare different browsers. However, according to at least one source, the data that SunSpider uses is small enough that it has become more of a cache test. That’s one reason why Google came out with its own benchmark suites, including Octane, which are better JavaScript tests than SunSpider. According to Google, Octane is based upon a set of well-known web applications and libraries.

This means, “a high score in the new benchmark directly translates to better and smoother performance in similar web applications.” Even though it may no longer be relevant as an indicator of JavaScript browsing performance, SunSpider is still quoted by many bloggers. SunSpider isn’t the only popular benchmark with issues; at least one other popular benchmark is said to have problems as well.

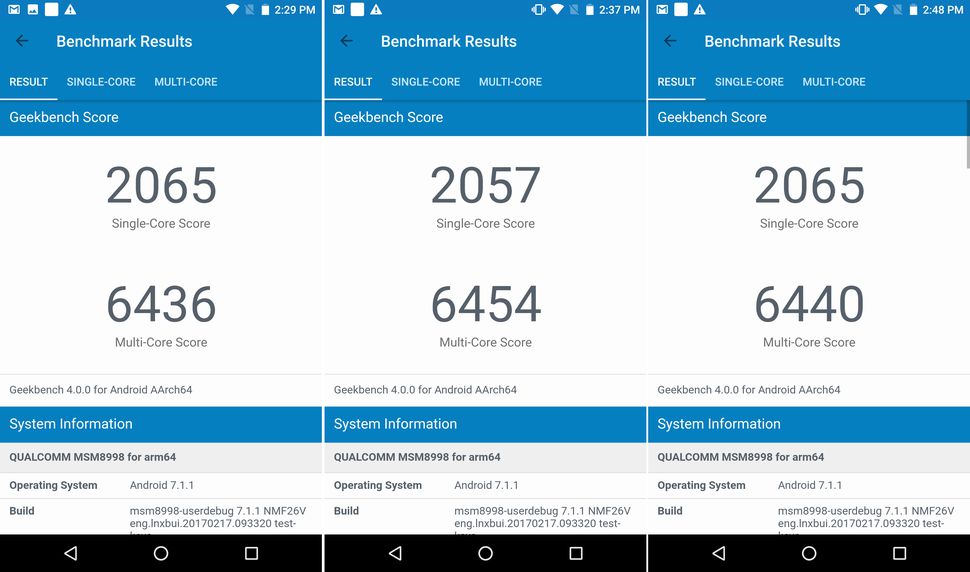

[Image caption: SunSpider is a good example of a benchmark which may no longer be relevant, yet people continue to use it.]

Benchmark scores are not always repeatable – In theory, you should be able to run the same benchmark on the same phone and get the same results over and over, but this doesn’t always occur.

If you run a benchmark immediately after a reboot and then run the same benchmark during heavy use, you’ll get different results. Even if you reboot every time before you benchmark, you’ll still get different scores due to memory allocation, caching, memory fragmentation, OS house-keeping and other factors like throttling.

Another reason you’ll get different scores on devices running exactly the same mobile processors and operating system is that different devices have different apps running in the background. For example, Nexus devices have far fewer apps running in the background than non-Nexus carrier-issued devices.

Even after you close all running apps, there are still apps running in the background that you can’t see, yet these apps are consuming system resources and can have an effect on benchmark scores. Some apps run automatically to perform housekeeping for a short period and then close.

The number and types of apps vary greatly from phone to phone and platform to platform, so this makes objective testing of one phone against another difficult.

Benchmark scores sometimes change after you upgrade a device to a new operating system. This makes it difficult to compare two devices running different versions of the same OS. In one comparison, the Samsung Galaxy S III running Android 4.0 gets a Geekbench score of 1560, while the same exact phone running Android 4.1 gets a Geekbench score of 1781. That’s a 14% increase. The Android 4.4 OS causes many benchmark scores to increase, but not in all cases.
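The size of that OS-related swing is easy to check. The sketch below is a minimal illustration built on the two Geekbench scores quoted above. The `Result` record and the helper function are hypothetical names introduced for the example, not part of any benchmark tool.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Result:
    device: str
    os_version: str
    score: int


def percent_change(before, after):
    """Return the score change from `before` to `after`, in percent."""
    return (after.score - before.score) / before.score * 100.0


# Scores quoted in the article for the same phone on two Android releases.
on_4_0 = Result("Samsung Galaxy S III", "Android 4.0", 1560)
on_4_1 = Result("Samsung Galaxy S III", "Android 4.1", 1781)

change = percent_change(on_4_0, on_4_1)
print(f"{on_4_0.device}: {change:.1f}% higher on {on_4_1.os_version}")
```

Keeping the OS version alongside each score makes it harder to compare two results that were never taken under the same conditions.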

For example, after moving to Android 4.4, Vellamo 2 scores drop significantly on some devices because it can’t make use of some aspects of hardware acceleration due to Google’s changes. Perhaps the biggest reason benchmark scores change over time is that benchmarks stress the processor, increasing its temperature.

When the processor temperature reaches a certain level, the device starts to throttle or reduce power. This is one of the reasons scores on benchmarks like AnTuTu change when they are run consecutive times.
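Run-to-run drift of this kind is easy to observe on any machine by repeating a fixed workload and comparing the timings. The following is a rough sketch rather than a real benchmark: `workload` is a hypothetical stand-in for a benchmark run, and the spread it reports depends on background activity as well as any thermal throttling.

```python
import statistics
import time


def workload(n=200_000):
    """A fixed CPU-bound task standing in for one benchmark run (hypothetical)."""
    total = 0
    for i in range(n):
        total += (i * i) % 7
    return total


def time_runs(runs=5):
    """Time the same workload several times in a row and return the durations."""
    timings = []
    for _ in range(runs):
        start = time.perf_counter()
        workload()
        timings.append(time.perf_counter() - start)
    return timings


def spread_percent(timings):
    """Gap between the slowest and fastest run, relative to the fastest, in percent."""
    fastest = min(timings)
    return (max(timings) - fastest) / fastest * 100.0


timings = time_runs()
print(f"median run: {statistics.median(timings) * 1000:.2f} ms")
print(f"spread between fastest and slowest run: {spread_percent(timings):.1f}%")
```

Reporting a median together with the spread, instead of a single run, gives readers a way to judge how much of a score difference is just noise.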

Other benchmarks have the same problem. In one video, the person testing several phones gets a Quadrant Standard score on the Nexus 4 that is 4569 on the first run and 4826 on a second run (skip to 14:25 to view).

Not all mobile benchmarks are cross-platform – Many mobile benchmarks are Android-only and can’t help you to compare an Android phone to the iPhone 5. Several popular mobile benchmarks are not available for iOS and other mobile platforms.

Some benchmarks are not yet 64-bit – Android 5.0 supports 64-bit apps, but most benchmarks do not run in 64-bit mode yet. There are a few exceptions to this rule.

A few Java-based benchmarks (Linpack, Quadrant) run in 64-bit mode and do see performance benefits on systems with 64-bit OS and processors. AnTuTu also supports 64-bit.

Mobile benchmarks are not time-tested – Most mobile benchmarks are relatively new and not as mature as the benchmarks which are used to test Macs and PCs. The best computer benchmarks are real world, relevant and produce repeatable scores. There is some encouraging news in this area, however, now that 3DMark is available for mobile devices. It would be nice if someone ported other time-tested benchmarks like SPECint to iOS as well.

Existing benchmarks don’t accurately measure the impact of memory speed or throughput.

Inaccurate measurement of the heterogeneous nature of mobile devices – Only 15% of a mobile processor is the CPU. Modern mobile processors also have DSPs, image processing cores, sensor cores, audio and video decoding cores, and more, but not one of today’s mobile benchmarks can measure any of this. This is a big problem.

Case Study 1: Is the New iPad Air Really 2-5x as Fast As Other iPads?

There have been a lot of articles lately about the benchmark performance of the new iPad Air. The writers of these articles truly believe that the iPad Air is dramatically faster than any other iPad, but most real world tests don’t show this to be true. One video compares 5 generations of iPads.

[Image caption: Benchmark tests suggest the iPad Air should be much faster than previous iPads.]

Results of side-by-side comparisons between the iPad Air and other iPads:

Test 1 – Start Up – iPad Air started up 5.73 seconds faster than the iPad 1. That’s 23% faster, yet the Geekbench 3 benchmark suggests the iPad Air should be several times faster than an iPad 2.

I would expect the iPad Air would be more than 23% faster than a product that came out 3 years and 6 months ago. Wouldn’t you?

Test 2 – Page load times – The narrator claims the iPad Air’s new MIMO antennas are part of the reason the new iPad Air loads webpages so much faster. First off, MIMO antennas are not new in mobile devices; they were in the Kindle HD two generations ago. Second, apparently Apple’s MIMO implementation isn’t effective, because if you freeze frame the video just before 1:00, you’ll see the iPad 4 clearly loads all of the text on the page before the iPad Air. All of the images on the webpage load on the iPad 4 and the iPad Air at exactly the same time, even though browser-based benchmarks suggest the iPad Air should load web pages much faster.

Test 3 – Video Playback – On the video playback test, the iPad Air was no more than 15.3% faster than the iPad 4 (3.65s vs. 4.31s).

Reality: Although most benchmarks suggest the iPad Air should be 2-5x faster than older iPads, at best, the iPad Air is only 15-25% faster than the iPad 4 in real world usage, and in some cases it is no faster.

Final Thoughts

You should never make a purchasing decision based on benchmarks alone. Most popular benchmarks are flawed because they don’t predict real world performance and they don’t take into consideration power consumption. They measure your mobile device in a way that you never use it: running all-out while it’s plugged into the wall.

It doesn’t matter how fast your mobile device can operate if your battery only lasts an hour. Even so, many bloggers still continue to propagate the myth that benchmarks are an indicator of real world performance. They claim they use them because they aren’t subjective, but then they mislead their readers about their often meaningless nature.

Some benchmarks do have their place however. Even though they are far from perfect they can be useful if you understand their limitations. However you shouldn’t read too much into them. They are just one indicator, along with product specs and side-by-side real world comparisons between different mobile devices. Bloggers should spend more time measuring things that actually matter like start-up and shutdown times, Wi-Fi and mobile network speeds in controlled reproducible environments, game responsiveness, app launch times, browser page load times, task switching times, actual power consumption on standardized tasks, touch-panel response times, camera response times, audio playback quality (S/N, distortion, etc.), video frame rates and other things that are related to the ways you use your device. Although most of today’s mobile benchmarks are flawed, there is some hope for the future.

Broadcom, Huawei, OPPO, Samsung Electronics and Spreadtrum recently announced the formation of MobileBench, a new industry consortium formed to provide more effective hardware and system-level performance assessment of mobile devices. They have a proposal for a new benchmark that is supposed to address some of the issues I’ve highlighted above. You can read more about this.

A Mobile Benchmark Primer

If you are wondering which benchmarks are the best, and which should not be used, a mobile benchmark primer should be of use.

[Image caption: Benchmarks like this one suggest the iPhone 5 is twice as fast as the iPhone 4S.]

Case Study 2: Is the iPhone 5 Really Twice as Fast?

Note: Although this section was written about the iPhone 5, it applies equally to the iPhone 5s. Like the iPhone 5, experts say the iPhone 5s is twice as fast in some areas, yet most users will notice little if any difference related to hardware alone. The biggest differences are related to changes in iOS 7 and the new registers in the A7. Apple and most tech writers believe the iPhone 5’s A6 processor is twice as fast as the chip in the iPhone 4S.

Benchmarks like the one in the above chart support these claims. A side-by-side video tests these claims.

[Image caption: In tests like this one, the iPhone 4S beats the iPhone 5 when benchmarks suggest it should be twice as slow.]

Results of side-by-side comparisons between the iPhone 5 and the iPhone 4S:

Opening the Facebook app is faster on the iPhone 4S (skip to 7:49 to see this).

The iPhone 4S also recognizes speech much faster, although the iPhone 5 returns the results to a query faster (skip to 8:43 to see this).

In a second test, the iPhone 4S once again beats the iPhone 5 in speech recognition and almost ties it in returning the answer to a math problem (skip to 9:01 to see this).

App launch times vary: in some cases the iPhone 5 wins, in others the iPhone 4S wins.

The iPhone 4S beats the iPhone 5 easily when SpeedTest is run (skip to 10:32 to see this).

The iPhone 5 does load web pages and games faster than the iPhone 4S, but it’s nowhere near twice as fast (skip to 12:56 on the video to see this).

I found a few other comparison videos which show similar results.